Backup Bottlenecks #1: size does matter

Ever wondered why offload speeds vary from day to day, while your setup is the same?

When working with footage you generally move so much data that the size of the files starts to have as much of an impact on the overall speed as your disks, hubs and interconnects do.

Why copying 10000x 100 MB isn’t the same as copying 10x 100 GB.

Imagine a shipping container, filled with 100 refrigerators. Every fridge has its own shipping label, telling you its brand, model, weight, serial number, and more. You’re responsible for indexing them and storing them in another container.

So you set out to read all the labels, copy the information into your ledger, and take the fridges out one by one. It takes a while, but at least everything is on wheels and the labels are all placed at eye level, so you can easily move them into the next container.

The next container is filled with all kinds of small home appliances. Every box comes in a different size, so the labels are all over the boxes. There are 10000 boxes in the container, so it takes you at least 100 times longer, and probably even more, as you have to pick up every box, search for the label, write down the information, and carry the box into the new container. Not very efficient, right?

Files work the same way: they are boxes with metadata like a name, file type, size, creation and modification dates, and permissions for reading and writing. To make matters worse, you have to recreate all the labels at the destination. (There’s also stuff inside the boxes, but luckily electrons all weigh the same.)
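To make the label a bit more tangible, here is a minimal Python sketch that reads the metadata of a single file using the standard library. The file name `clip_0001.dng` and the helper `read_label` are made up for this illustration; a copy tool has to do something like this for every file it touches, and then write the same information again on the destination.

```python
import os
import stat
import tempfile
import time


def read_label(path):
    """Return a file's 'shipping label' (its metadata) as a plain dict."""
    info = os.stat(path)
    return {
        "name": os.path.basename(path),
        "size_bytes": info.st_size,
        "modified": time.ctime(info.st_mtime),
        "permissions": stat.filemode(info.st_mode),
    }


# Create a throwaway 1 KB file so the example runs anywhere.
with tempfile.TemporaryDirectory() as folder:
    path = os.path.join(folder, "clip_0001.dng")  # hypothetical file name
    with open(path, "wb") as handle:
        handle.write(b"\0" * 1024)
    label = read_label(path)
    print(label)
```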

Our shipping container is of course a hard disk, and its list of contents is the file system. Luckily, it doesn’t matter how many files you store on it, or in what order. It does matter, however, when filling it up or emptying it: creating the list of contents and looking up each file’s metadata costs time.
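A rough way to reason about this is to split a copy into two parts: the time spent streaming the actual data, and a fixed amount of bookkeeping per file. The sketch below does just that. The throughput (500 MB/s) and per-file cost (5 ms) are assumptions picked for illustration, not measurements, and the function name is hypothetical.

```python
def estimated_copy_seconds(total_bytes, file_count, bytes_per_second, seconds_per_file):
    """Streaming time plus a fixed bookkeeping cost for every file."""
    return total_bytes / bytes_per_second + file_count * seconds_per_file


TB = 10**12
THROUGHPUT = 500 * 10**6  # assumed: 500 MB/s sustained
PER_FILE = 0.005          # assumed: 5 ms of metadata work per file

scenarios = {
    "10 x 100 GB": 10,
    "10000 x 100 MB": 10_000,
    "200000 x 5 MB": 200_000,  # roughly the size of single raw frames
}

# Every scenario moves the same 1 TB; only the number of files changes.
estimates = {
    name: estimated_copy_seconds(TB, count, THROUGHPUT, PER_FILE)
    for name, count in scenarios.items()
}

for name, seconds in estimates.items():
    print(f"{name}: {seconds:.0f} s")
```

Under these assumed numbers the same terabyte takes about 2000, 2050 and 3000 seconds respectively. The real per-file cost depends on your disks, file system and protocol, so treat this as a way to think about the problem rather than a prediction.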

Network Transfers

Network disks behave much in the same way as local disks. However, due to the nature of the TCP/IP protocol the file size becomes an even bigger bottleneck. You can nowadays get a fast NAS with a lot of disks in a RAID setup, so the bottleneck will increasingly become the network protocol.

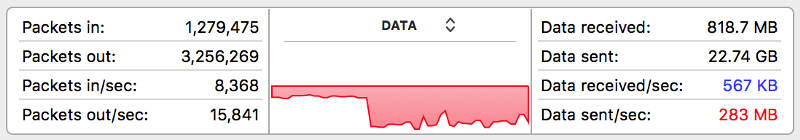

With the help of Hedge reseller The Future Store we moved a bunch of test data from a Mac Pro to a G-Technology G-RACK using AFP. The results show the relative inefficiency of moving small files across a 10GigE network:

Top speed is reached when moving large clips with an intermediate codec like ProRes or DNx, while the transfer is choked when moving a bunch of CinemaDNG files. Just the overhead of DNG files slows down the transfer by 60%. That doesn’t mean there’s anything wrong with DNG, on the contrary: it’s a very useful format. It just has implications for your workflow.

(The speeds reached with the G-RACK here should not be considered a benchmark; in reality it’s a lot faster. AFP is the culprit here: you’re better off using protocols like NFS or SMB.)

File based versus block based

Shipping container dimensions are standardized, so it makes sense to use boxes that fit snugly inside. It’s the fastest way to make sure you use 100% of the space available, and unloading becomes very easy: if you know beforehand where a box will be, you can take it out blindly. This is called block based copying, as opposed to file based copying.

This is where SAN typically enters the game: whereas copying files locally and with a regular network protocol is done on a per-file basis, copying data with a SAN protocol will only give you big blocks of data. A bit (no pun intended) more data than needed may be transferred, as not every file can be divided perfectly by the set block size. The sheer efficiency makes up for it in spades, though.
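The "bit more data than needed" is easy to put a number on: a file always occupies a whole number of blocks, so the last block is usually only partly filled. Here is a small sketch of that arithmetic, assuming a 4096-byte block size purely as an example (the helper names are hypothetical, and real block sizes vary per setup).

```python
def blocks_needed(file_size, block_size):
    """Number of whole blocks required to hold file_size bytes."""
    return -(-file_size // block_size)  # ceiling division on integers


def padding_bytes(file_size, block_size):
    """Unused bytes in the last block of the file."""
    return blocks_needed(file_size, block_size) * block_size - file_size


BLOCK_SIZE = 4096  # assumed block size, for illustration only

for size in (10_000, 8_192, 1):
    print(size, blocks_needed(size, BLOCK_SIZE), padding_bytes(size, BLOCK_SIZE))
```

The padding can never exceed one block per file, which is why it hardly matters for large clips and only starts to add up with huge numbers of tiny files.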

SAN is generally used with fiber connections, but it has a sibling that works perfectly fine over regular copper ethernet, and that many NAS systems support: iSCSI. If you have a NAS, check if it supports this protocol and do some testing with it.

Thanks for reading part 1! Next week in part 2: frames versus clips.

Liked this? Here’s a $10 coupon for Hedge, the fastest way to back up media.